Adapting prompts across LLMs

Prompt adaptation is in private beta

Please reach out to us if you want to test it out!

Migrating applications from one LLM to another requires extensive, tedious prompt engineering to avoid performance degradation. Not Diamond can help you automatically adapt your prompts from the original model to a new target LLM.

In order to adapt prompts from one model to another, you will need the following:

- A Not Diamond API key

- Your original prompt

- An evaluation dataset and metric for measuring the quality of LLM responses

- A list of target models you want to adapt to

The example below shows how to adapt a RAG workflow on the HotpotQA dataset, originally built for OpenAI's GPT-4o, to work optimally on Anthropic's Claude Sonnet 4.5.

Setup

First, download the HotpotQA dataset.

wget "https://drive.google.com/uc?export=download&id=1TeXM_Z-F3-o6ouooigaEWT3axUJA65kv" -O hotpotqa.jsonl

Then install the pandas and requests dependencies, which the helper functions below use.

pip install pandas requests

Minimum of 25 samples required

Prompt adaptation currently requires at least 25 training samples to work effectively. You can submit up to 200 samples for a given request. Larger datasets result in longer job times.
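The sample limits above can be checked locally before a request is submitted. The following is a minimal sketch, not part of the Not Diamond API: the helper name `select_training_samples` and the two constants are hypothetical, and only the numbers 25 and 200 come from the note above.

```python
MIN_SAMPLES = 25   # minimum stated in the note above
MAX_SAMPLES = 200  # maximum stated in the note above


def select_training_samples(samples: list, limit: int = MAX_SAMPLES) -> list:
    """Return at most `limit` samples, or raise if there are too few to submit.

    Hypothetical local check; the service may apply its own validation.
    """
    if not MIN_SAMPLES <= limit <= MAX_SAMPLES:
        raise ValueError(
            f"limit must be between {MIN_SAMPLES} and {MAX_SAMPLES}, got {limit}"
        )
    if len(samples) < MIN_SAMPLES:
        raise ValueError(f"need at least {MIN_SAMPLES} samples, got {len(samples)}")
    # Keep the first `limit` samples; a shorter list is returned unchanged.
    return samples[:limit]
```

Truncating to a smaller `limit` is one way to trade coverage for a shorter job time while experimenting.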

Next, we define the system prompt and prompt template for our current workflow.

system_prompt = """I'd like for you to answer questions about a context text that will be provided. I'll give you a pair
with the form:
Context: "context text"
Question: "a question about the context"
Generate an explicit answer to the question that will be output. Make sure that the answer is the only output you provide,
and the analysis of the context should be kept to yourself. Answer directly and do not prefix the answer with anything such as
"Answer:" nor "The answer is:". The answer has to be the only output you explicitly provide.
The answer has to be as short, direct, and concise as possible. If the answer to the question can not be obtained from the provided
context paragraph, output "UNANSWERABLE". Here's the context and question for you to reason about and answer.
"""

prompt_template = """
Context: {context}
Question: {question}
"""

Finally, we'll define some helper functions for calling the adaptation APIs.

SDK support coming soon

Prompt adaptation support in our Python SDK is coming soon. Please contact us if you would like to test this new SDK feature.

from typing import List, Dict, Any, Optional
import json

import pandas as pd
import requests

def request_prompt_adaptation(
    system_prompt: str,
    prompt_template: str,
    fields: List[str],
    train_data: List[Dict[str, str | Dict[str, str]]],
    origin_model: Dict[str, str],
    target_models: List[Dict[str, str]],
    notdiamond_api_key: str,
    evaluation_metric: Optional[str] = None,
    evaluation_config: Optional[Dict] = None,
    request_url: str = "https://api.notdiamond.ai/v2/prompt/adapt"
) -> str:
    """
    Helper method to call the prompt adaptation endpoint.
    Returns the adaptation_run_id of the submitted job.
    """
    # Exactly one of evaluation_metric and evaluation_config must be set.
    if (evaluation_metric and evaluation_config) or (not evaluation_metric and not evaluation_config):
        raise ValueError("Either evaluation_metric or evaluation_config must be provided, but not both or neither.")

    request_body = {
        "system_prompt": system_prompt,
        "template": prompt_template,
        "fields": fields,
        "goldens": train_data,
        "origin_model": origin_model,
        "target_models": target_models,
    }
    if evaluation_metric:
        request_body["evaluation_metric"] = evaluation_metric
    if evaluation_config:
        # The custom judge configuration is sent as a JSON-encoded string.
        request_body["evaluation_config"] = json.dumps(evaluation_config)

    headers = {
        "Authorization": f"Bearer {notdiamond_api_key}",
        "content-type": "application/json"
    }
    resp = requests.post(
        request_url,
        headers=headers,
        json=request_body
    )

    if resp.status_code == 200:
        response = resp.json()
        return response["adaptation_run_id"]
    else:
        raise Exception(
            f"Request to adapt prompt failed with code {resp.status_code}: {resp.text}"
        )

def get_prompt_adaptation_results(
    adaptation_run_id: str,
    notdiamond_api_key: str,
    base_url: str = "https://api.notdiamond.ai"
) -> Dict[str, Any]:
    """
    Helper method to get the results of a prompt adaptation request.
    Calls: {base_url}/v2/prompt/adaptResults/{adaptation_run_id}
    Example: https://api.notdiamond.ai/v2/prompt/adaptResults/00000000-0000-0000-0000-000000000000
    """
    headers = {
        "Authorization": f"Bearer {notdiamond_api_key}",
        "content-type": "application/json"
    }
    # Construct the full endpoint URL
    endpoint_url = f"{base_url}/v2/prompt/adaptResults/{adaptation_run_id}"
    resp = requests.get(endpoint_url, headers=headers)

    if resp.status_code == 200:
        response = resp.json()
        return response
    else:
        raise Exception(
            f"Requesting prompt adaptation result failed with code {resp.status_code}: {resp.text}"
        )

def load_json_dataset(dataset_path: str, n_samples: int = 200) -> tuple[list[str], list[dict]]:
    """Load a JSON Lines dataset and convert it into the golden-sample format."""
    df = pd.read_json(dataset_path, lines=True)
    # Keep at most n_samples rows from the start of the file (0 keeps every row).
    if n_samples:
        df = df.iloc[:n_samples]

    fields: list[str] = ["question", "context"]
    golden_dataset = []
    for _, row in df.iterrows():
        # Join the retrieved documents into a single context string.
        sample_fields = {
            "question": row["question"],
            "context": "\n\n".join(row["documents"]),
        }
        golden_dataset.append(dict(fields=sample_fields, answer=row["response"]))
    return fields, golden_dataset

Evaluation metrics

Prompt adaptation optimizes prompts against one of the following evaluation metrics.

LLM-judged metrics

"LLMaaJ:Sem_Sim_1": This metric uses an LLM-as-a-judge to evaluate the semantic similarity of the model response to the golden answer and outputs a binary score: 1 if the two answers are semantically similar, 0 otherwise. This is the default metric.

The prompt for this metric can be found below. By default, we use openai/gpt-4o-2024-08-06 as the judge.

Given the predicted answer and reference answer, compare them and check whether they mean the same.

Following are the given answers:

Predicted Answer: {predicted_answer}

Reference Answer: {gt_answer}

On a NEW LINE, give a score of 1 if the answers mean the same, and 0 if they differ, and nothing more.

Matching metrics

"JSON_Match": This metric determines whether the LLM's JSON output matches the golden JSON output. We compute precision, recall, and f1 scores based on individual fields in the JSON, averaged across all samples in the dataset. This is useful for applications that require structured outputs.

More evaluation metrics coming soon

If you need to support a custom evaluation metric, please reach out to us and we will onboard it for you.
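To make the JSON_Match description more concrete, here is one plausible reading of "precision, recall, and f1 based on individual fields", written as a rough sketch. The exact scoring Not Diamond applies is not documented on this page, so the matching rule (exact equality of top-level fields), the handling of invalid JSON, and the function names are all assumptions.

```python
import json


def json_field_scores(predicted: str, golden: str) -> dict:
    """Field-level precision, recall, and F1 for two flat JSON objects.

    Assumption: a field counts as matched when the key exists in the golden
    object and the values are exactly equal.
    """
    gold = json.loads(golden)
    try:
        pred = json.loads(predicted)
    except json.JSONDecodeError:
        pred = {}  # assumption: unparseable output matches nothing
    if not isinstance(pred, dict):
        pred = {}
    matched = sum(1 for key, value in pred.items() if key in gold and gold[key] == value)
    precision = matched / len(pred) if pred else 0.0
    recall = matched / len(gold) if gold else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def average_scores(pairs: list) -> dict:
    """Average the per-sample scores over (predicted, golden) string pairs."""
    if not pairs:
        raise ValueError("at least one (predicted, golden) pair is required")
    per_sample = [json_field_scores(pred, gold) for pred, gold in pairs]
    return {
        name: sum(score[name] for score in per_sample) / len(per_sample)
        for name in ("precision", "recall", "f1")
    }
```

Under this reading, an output with extra fields lowers precision, while an output with missing fields lowers recall.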

Custom LLM-as-a-judge metrics

If you have a custom LLM-as-a-judge metric you would like to use, you can specify an "evaluation_config" instead of an "evaluation_metric". The "evaluation_config" should consist of the following:

- llm_judging_prompt: The custom prompt for the LLM judge. The prompt must contain a {question} and an {answer} field. The {question} field is used to insert the formatted prompts from your dataset and the {answer} field is used to insert the LLM's response to the question.

- llm_judge: The LLM judge to use for evaluation, in "provider/model" format. The list of supported LLMs can be found below in Supported models.

- correctness_cutoff: The cutoff score above which the response is deemed correct. For example, if the judging score is from 1 to 10, you might set the cutoff at 8.

An example of this is shown below:

evaluation_config = {
    "llm_judging_prompt": (
        "Does the assistant's answer properly answer the user's question? question: {question} answer: {answer} "
        "Score a 1 if the answer is correct, 0 otherwise. Do not output any other values or text - only the score."
    ),
    "llm_judge": "openai/gpt-4o-2024-11-20",
    "correctness_cutoff": 0,
}

Request prompt adaptation

First, we will format the dataset for prompt adaptation. Not Diamond expects a list of samples consisting of prompt template field arguments, so ensure that prompt_template.format(**sample['fields']) returns a valid user prompt for each sample.
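One way to check this for a whole dataset before submitting is to compare the placeholders in the template with the keys of each sample. The sketch below uses only the standard library; the helper names `template_fields` and `check_samples` are hypothetical and not part of any Not Diamond SDK.

```python
from string import Formatter


def template_fields(template: str) -> set:
    """Collect the named placeholders, such as {context}, used in a template."""
    return {name for _, name, _, _ in Formatter().parse(template) if name}


def check_samples(template: str, samples: list) -> list:
    """Return (index, missing_field_names) for samples that cannot fill the template."""
    required = template_fields(template)
    problems = []
    for index, sample in enumerate(samples):
        missing = required - set(sample["fields"])
        if missing:
            problems.append((index, sorted(missing)))
    return problems


# Tiny illustrative dataset in the same shape as the golden samples in this guide.
demo_template = "\nContext: {context}\nQuestion: {question}\n"
demo_samples = [
    {"fields": {"context": "some text", "question": "a question"}, "answer": "a"},
    {"fields": {"question": "a question"}, "answer": "b"},  # context is missing
]
print(check_samples(demo_template, demo_samples))
```

An empty list means every sample supplies all of the fields the template needs.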

fields, pa_ds = load_json_dataset("hotpotqa.jsonl", 25)
print(prompt_template.format(**pa_ds[0]['fields']))

Next, specify the origin_model, which you query with the current system prompt and prompt template, and your target_models, which you would like to query with adapted prompts. You can list multiple target models.

origin_model = {"provider": "openai", "model": "gpt-4o-2024-11-20"}

target_models = [
    {"provider": "anthropic", "model": "claude-sonnet-4-5-20250929"},
]

Use multiple target models for the best results

Our prompt adaptation API allows you to define up to 4 target models. If you're unsure about which model you should migrate to, defining multiple target models lets you see at a glance which model is best suited for your data.

Supported models

Prompt adaptation currently only supports adapting prompts to the following target models:

openai/gpt-4o-2024-08-06

openai/gpt-4o-2024-11-20

openai/gpt-4o-mini-2024-07-18

openai/gpt-4.1-2025-04-14

openai/gpt-4.1-mini-2025-04-14

openai/gpt-4.1-nano-2025-04-14

openai/gpt-5-2025-08-07

openai/gpt-5-mini-2025-08-07

openai/gpt-5.1-2025-11-13

anthropic/claude-sonnet-4-5-20250929

anthropic/claude-3-7-sonnet-20250219

anthropic/claude-sonnet-4-20250514

anthropic/claude-opus-4-20250514

anthropic/claude-opus-4-5-20251101

google/gemini-2.5-flash

google/gemini-2.5-pro

google/gemini-3-pro-preview

mistral/mistral-large-2411

qwen/qwen3-14b

qwen/qwen3-32b

qwen/qwen3-235b-a22b

meta-llama/llama-3.1-8b-instruct

meta-llama/llama-3.1-70b-instruct

meta-llama/llama-3.1-405b-instruct

moonshotai/kimi-k2-thinking

openrouter/gpt-oss-120b

openrouter/llama-3.3-70b-instruct
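A request that names an unsupported model or too many targets can be caught locally before it is sent. The following is a small sketch under two assumptions: that each `{"provider": ..., "model": ...}` entry corresponds to a "provider/model" identifier in the list above, and that the limit of 4 target models applies per request. The helper name and the `SUPPORTED_SUBSET` set are illustrative only.

```python
MAX_TARGET_MODELS = 4  # limit stated earlier in this guide


def validate_target_models(target_models: list, supported: set) -> list:
    """Return the "provider/model" identifiers, raising on an invalid selection."""
    if not 1 <= len(target_models) <= MAX_TARGET_MODELS:
        raise ValueError(
            f"expected 1 to {MAX_TARGET_MODELS} target models, got {len(target_models)}"
        )
    identifiers = [f"{m['provider']}/{m['model']}" for m in target_models]
    unsupported = [name for name in identifiers if name not in supported]
    if unsupported:
        raise ValueError(f"unsupported target models: {unsupported}")
    return identifiers


# A subset of the supported list above, used only for illustration.
SUPPORTED_SUBSET = {
    "anthropic/claude-sonnet-4-5-20250929",
    "openai/gpt-4.1-2025-04-14",
    "google/gemini-2.5-pro",
}
demo_targets = [{"provider": "anthropic", "model": "claude-sonnet-4-5-20250929"}]
print(validate_target_models(demo_targets, SUPPORTED_SUBSET))
```

In practice the `supported` set would hold the full list from this page, which may change as models are added.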

Finally, call the API with your NOTDIAMOND_API_KEY and the adaptation request will be submitted to Not Diamond's servers. You will get back a prompt_adaptation_id.
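Rather than pasting the key into source code, it can be read from the environment. This is a minimal sketch; using an environment variable named `NOTDIAMOND_API_KEY` is an assumption based on the name used in this guide, and `load_api_key` is a hypothetical helper.

```python
import os


def load_api_key(env_var: str = "NOTDIAMOND_API_KEY") -> str:
    """Read the API key from an environment variable, failing early if it is unset."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"set the {env_var} environment variable before submitting a job")
    return key
```

The returned value can then be passed as the `notdiamond_api_key` argument of the helper functions defined earlier.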

Concurrent job limits

Prompt adaptation is still in beta. To accommodate LLM provider service limitations, we have a job concurrency limit of 1 job per user. It is fine for a single job to include multiple target models. We are working to increase this limit in the near future.

prompt_adaptation_id = request_prompt_adaptation(
    system_prompt=system_prompt,
    prompt_template=prompt_template,
    fields=fields,
    train_data=pa_ds,
    origin_model=origin_model,
    target_models=target_models,
    evaluation_metric="LLMaaJ:Sem_Sim_1",
    notdiamond_api_key="YOUR_NOTDIAMOND_API_KEY"
)
print(prompt_adaptation_id)

To use a custom LLM-as-a-judge metric instead, pass evaluation_config in place of evaluation_metric:

prompt_adaptation_id = request_prompt_adaptation(
    system_prompt=system_prompt,
    prompt_template=prompt_template,
    fields=fields,
    train_data=pa_ds,
    origin_model=origin_model,
    target_models=target_models,
    evaluation_config=evaluation_config,
    notdiamond_api_key="YOUR_NOTDIAMOND_API_KEY"
)

Request status

Get adapted prompt and evaluation results

Once the prompt adaptation request is completed, you can request the results of the optimization using the same prompt_adaptation_id.

results = get_prompt_adaptation_results(prompt_adaptation_id, "YOUR_NOTDIAMOND_API_KEY")
print(results)

The response will return a dictionary with the following fields:

{
  "id": "uuid", // The prompt adaptation id
  "created_at": "datetime", // Timestamp
  "origin_model": {
    "model_name": "openai/gpt-4o-2024-11-20", // The original model the prompt was designed for
    "score": 0.8, // The original model's score on the dataset before optimization
    "evals": {"LLMaaJ:Sem_Sim_1": 0.8}, // The original model's evaluation results on the dataset
    "system_prompt": "...", // The baseline system prompt submitted
    "user_message_template": "...", // The baseline prompt template submitted
    "result_status": "completed"
  },
  "target_models": [
    {
      "model_name": "anthropic/claude-sonnet-4-5", // The target model the prompt was adapted to
      "pre_optimization_score": 0.64, // The target model's score on the dataset before optimization
      "pre_optimization_evals": {"LLMaaJ:Sem_Sim_1": 0.64}, // The target model's evaluation results on the dataset before optimization
      "post_optimization_score": 0.8, // The target model's score on the dataset after optimization
      "post_optimization_evals": {"LLMaaJ:Sem_Sim_1": 0.8}, // The target model's evaluation results on the dataset after optimization
      "system_prompt": "...", // The adapted system prompt for the target model
      "user_message_template": "...", // The adapted prompt template for the target model
      "user_message_template_fields": ["..."], // Field arguments in the user_message_template
      "result_status": "completed"
    }
  ]
}

result_status can have one of the following statuses:

- created: the optimization job has been received.

- queued: the optimization job is in the queue waiting to be processed.

- processing: the optimization job is currently running. Evaluation scores will be null until the job is completed.

- completed: the optimization job is finished and you will see the evaluation scores populated.

- failed: the optimization job failed, please try again or contact support.
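Because a job moves through these statuses over time, a client will typically poll until it reaches a final state. The sketch below shows one way to do that. It assumes that completed and failed are the only terminal statuses and that checking the `result_status` of each entry in `target_models` is sufficient; the function name, polling interval, and retry limit are illustrative choices, not documented values.

```python
import time
from typing import Any, Callable, Dict

TERMINAL_STATUSES = {"completed", "failed"}  # assumption: no other final states


def wait_for_results(
    fetch: Callable[[], Dict[str, Any]],
    poll_seconds: float = 30.0,
    max_polls: int = 120,
) -> Dict[str, Any]:
    """Call `fetch` until every target model reports a terminal result_status."""
    for attempt in range(max_polls):
        results = fetch()
        statuses = [t.get("result_status") for t in results.get("target_models", [])]
        if statuses and all(status in TERMINAL_STATUSES for status in statuses):
            return results
        if attempt < max_polls - 1:
            time.sleep(poll_seconds)  # wait before asking again
    raise TimeoutError(f"job not finished after {max_polls} polls")
```

With the helper from this guide, `fetch` could be `lambda: get_prompt_adaptation_results(prompt_adaptation_id, api_key)`. Passing the fetch function in keeps the polling logic easy to test without network access.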

Each model in target_models will have its own results dictionary. If an adaptation failed for a specific target model, please try again or contact support.
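When several target models are submitted, the per-model dictionaries can be reduced to a short comparison. The following sketch relies only on the field names shown in the sample response above; the helper name `summarize_targets` is hypothetical, and targets that are not completed are skipped because their scores are null.

```python
def summarize_targets(results: dict) -> list:
    """Return (model_name, pre_score, post_score, gain) for completed targets, best first."""
    rows = []
    for target in results.get("target_models", []):
        if target.get("result_status") != "completed":
            continue  # scores are null until a job completes
        pre = target["pre_optimization_score"]
        post = target["post_optimization_score"]
        rows.append((target["model_name"], pre, post, round(post - pre, 4)))
    # Sort by the post-optimization score, highest first.
    return sorted(rows, key=lambda row: row[2], reverse=True)


example = {  # values copied from the sample response above
    "target_models": [
        {
            "model_name": "anthropic/claude-sonnet-4-5",
            "pre_optimization_score": 0.64,
            "post_optimization_score": 0.8,
            "result_status": "completed",
        }
    ]
}
for name, pre, post, gain in summarize_targets(example):
    print(f"{name}: {pre} -> {post} (gain {gain})")
```

Comparing the post-optimization score with the origin model's `score` gives a rough sense of whether the migration preserves quality on the dataset.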

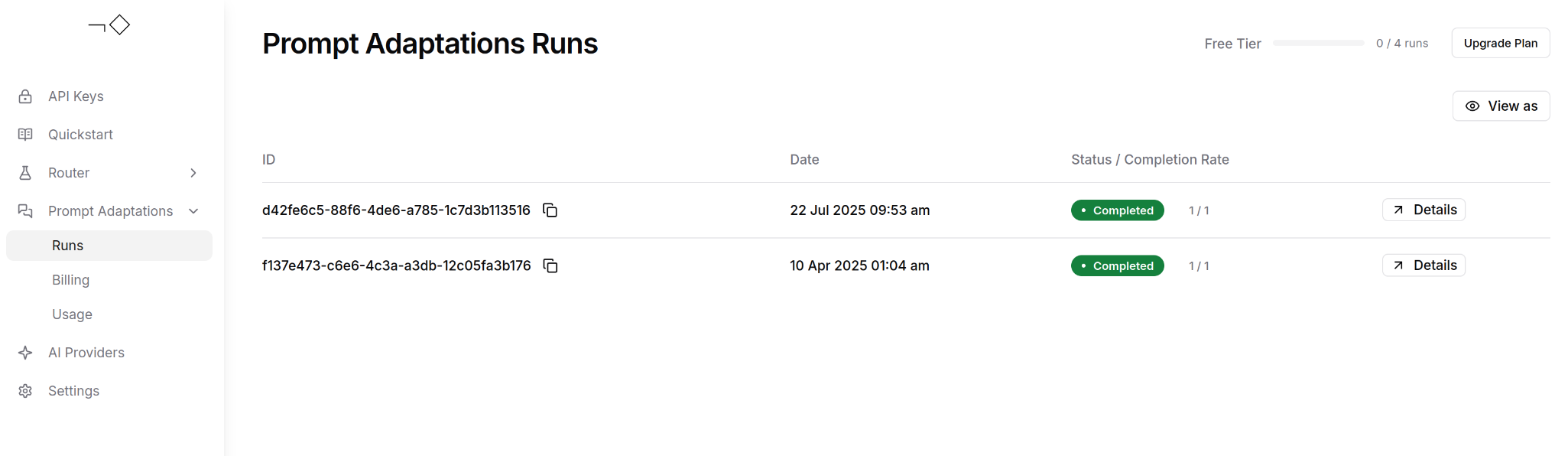

View your prompt adaptation requests and results

You can also use the dashboard to view your runs, their status, and copy the optimized prompts directly.
